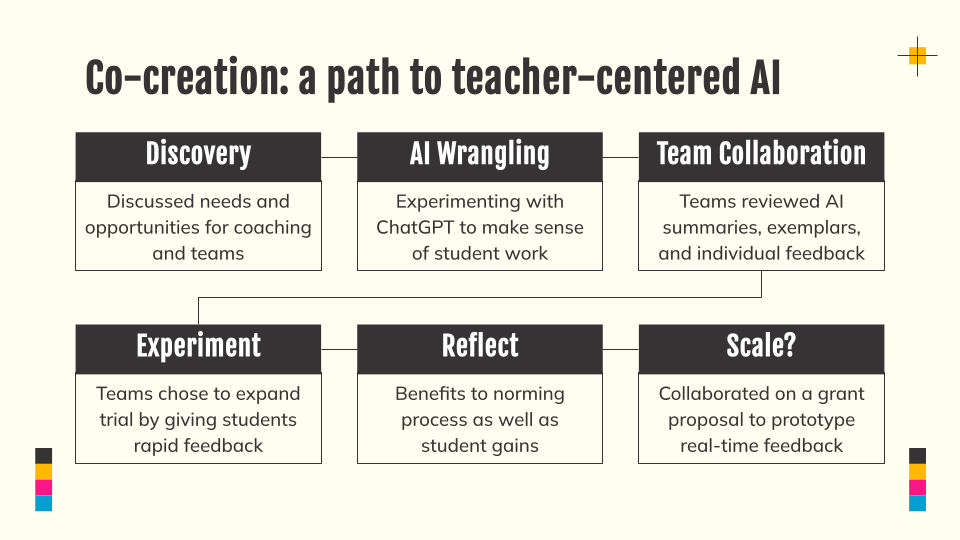

Last week, we hosted a small Coaching Science Lab with educators, instructional coaches, and district leaders to explore a simple, open-ended question:

What becomes possible when we use everyday AI tools to look more closely at student thinking—without changing who’s in charge of instruction?

Experiences from the classroom

Val shared how she used student drawings and explanations from the OpenSciEd Grade 8 Sound Waves unit to better understand student thinking, generate feedback more efficiently, and decide what to teach next.

Val’s classroom workflow:

- student work as the starting point

- generated feedback in the form of strengths and questions to push learners forward

- AI support that let her give feedback directly referencing each student's work, and much faster than would otherwise have been possible

Students loved specific, concrete feedback.

Using everyday AI tools on purpose

For the hands-on portion, we practiced giving student feedback with off-the-shelf AI tools like ChatGPT, Claude, and Gemini to show how you can get started without any special tools.

Participants could see exactly:

- how to prompt the AI with student instructions, reviewer instructions, and student work

- what the AI produced

- how to chat with the AI to go deeper

This demystified the AI and showed how it can support genuinely useful analysis of real student work.
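If you'd rather assemble the prompt in a script than paste each piece by hand, here is a minimal sketch of the three-part structure described above. It is only an illustration, not the prompt we used in the session: the function name, the section labels, and the sample text are all made up for this example, and the output is plain text you would paste into whichever chat tool you prefer.

```python
def build_feedback_prompt(student_instructions: str,
                          reviewer_instructions: str,
                          student_work: str) -> str:
    """Join the three inputs into one labeled prompt (hypothetical helper)."""
    sections = [
        ("STUDENT INSTRUCTIONS", student_instructions),
        ("REVIEWER INSTRUCTIONS", reviewer_instructions),
        ("STUDENT WORK", student_work),
    ]
    parts = []
    for title, body in sections:
        body = body.strip()
        if not body:
            # An empty section usually means something was forgotten.
            raise ValueError(f"{title} is empty")
        parts.append(f"## {title}\n{body}")
    return "\n\n".join(parts)


if __name__ == "__main__":
    # Placeholder text only; swap in your own task and student response.
    prompt = build_feedback_prompt(
        student_instructions="Draw and explain a model of how sound travels.",
        reviewer_instructions=(
            "List strengths in the student's work, then ask questions "
            "that push the learner forward. Refer directly to the work."
        ),
        student_work="(paste the student's explanation here)",
    )
    print(prompt)
```

Keeping the three parts in labeled sections makes it easy to swap in a new piece of student work while leaving the task and reviewer instructions unchanged. The teacher still reviews and revises whatever the AI returns.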

The real leverage wasn’t the tool.

It was the thinking around:

- what counts as evidence in student work

- what feedback actually helps students improve

- what patterns matter for coaching and planning

AI simply helped us move through that thinking faster.

Teacher judgment stays at the center

A strong theme throughout the session was role clarity.

Participants responded well to an approach where:

- teachers review and revise all feedback

- tone and instructional intent remain human

- AI suggestions are visible, editable, and contextual

- patterns support coaching conversations rather than replace them

The framing that stuck was simple:

Teachers hope to engage students in more, and deeper, feedback.

AI helps teachers do that work more often, with less friction.

An invitation, not a conclusion

Participants in the Coaching Science Lab session showed creativity and curiosity, encouraging us to create more opportunities for shared practice around real student work.

We’re excited to continue learning and doing together.

Want to explore this yourself?

If you’re curious to try this approach in your own context, we’ve shared two lightweight entry points:

- A one-page guide that outlines the basic workflow and design principles

→ How to: Generate instant CER student feedback with ChatGPT, Claude, or Gemini

- A sample AI prompt you can use or adapt with your own student work

→ CER Feedback AI Prompt Example

This is a great place to start exploring.

We’re continuing to host small Coaching Science Labs as spaces to test ideas, learn from real classrooms, and figure out what responsible, useful AI support can look like in practice.

If you’re interested in joining a future session—or just trying this on your own—we’d love to learn alongside you. Browse or subscribe to our calendar to join us for upcoming events.